I’m currently writing a “journey” about breakups for the app I work for. That might not sound like much fun, but it is. This is partially because it’s a “relatable af” problem, which means I’m writing for an eager, often grateful audience. It’s also fun because the neuroscientific research about breakups is absolutely wild.

For example, I recently learned:

The parts of the brain responsible for forming judgments of, and feeling afraid of, other people shut down when we begin to fall in love. If you’re falling for someone, you cannot judge the other person properly. You don’t have access to the necessary equipment. Oof.

We store negative memories in a more fragmented manner, so after a breakup, it’s literally harder to access memories of the bad times compared to the good ones, which encourages nostalgia.

As I convey this to my readers, I can’t help but acknowledge that these are comical and also terrifying facts. Many people ruin their lives before and during breakups, wasting years and opportunities, becoming their worst selves, acting against their deepest values. Reading these studies alongside the literature on how those going through a breakup are not dissimilar to those going through drug withdrawal, it’s easiest to conclude that our brains are in some very concrete sense working against us.

Worse, there’s not much we can do about it. Sure, we can try to arrange our lives so our brains don’t dictate our actions. But none of that much changes what I’ve outlined above about our internal experience. Even if we do all the right things in this relatable breakup scenario, for some time we’ll still be divorced emotionally from the reality of our own lives, unable to really feel why our ex wasn’t the right person (or was downright dangerous, in some unfortunate scenarios). We’ll still be unable to properly remember what happened, even though we were there. No wonder it’s sometimes scary to watch this sort of thing from the outside; no wonder we despair as our friends get back together when they should not, and agonise in cases of abuse.

Our brains (and with them, our selves) are driven by their own often-destructive endemic logics. It’s not a “home invasion” of any sort or an external influence that’s to blame; nor is it a glitch, an error. It’s not even that we have imbibed a faulty set of ideas that could be argued against or otherwise displaced. The problem is affective and non-rational, and the danger is coming from inside the house.

I’m not trying to overdramatise here. I study cognitive science, social psychology, and neuroscience in my work, but I do so from outside the formal scientific discipline. (My PhD was about the political implications of psychological phenomena, yes, but conducted in a politics department.) Ever conscious of this, I try to be very careful about how I understand and employ scientific research. I do my best to find studies that show the opposite of my other sources. I long for (and mostly don’t find) pre-registration. I’ve learned all about p-values. I question the constructs, I distrust the DSM. I worry about the problem of attributing behaviours to any one mechanism. For my upcoming book, I’ve carefully written or called the major researchers behind what I cite, to check my understanding and compare my thinking. I always assume I know less than I think I do. I also keep in mind that “science” is regularly wrong.

With all that in mind, I have come to this certainty about the brain:

It’s frightening to have one, to be one.

The scariness of what the brain is really doing day to day comes to mind regularly because there are ongoing debates about how to write about and metaphorise cognitive science. For example, since we’re living through a fairly technophilic time, the brain often gets compared to a computer, and people in fields surrounding mine debate how good a comparison that is. (It’s a bad one.) Our brains actually don’t do many of the things that computers do; one particularly relevant example is that they do not really store information. Instead, they often recreate a memory each time they revisit it, generally with very poor accuracy, especially if bad things have happened (see above breakup point number two). All this leads to extremely faulty memories that will often feel unquestionably true. This is relevant not only for breakups, but also for designing learning experiences as I sometimes do, and for understanding why eyewitness testimony isn’t trustworthy. It’s also relevant because it means that the fantasy that we can upload our brains to a computer and live forever, or otherwise store our “selves” in any way, is faulty at its very premise, in some important sense. We are not really “stored” in there at all.1

Another example of the frightening aspects of the brain: in my next big book, I’ll write about social atrophy, the way that parts of the brain essentially wither away when we’re not regularly in social contact with others. This has a profound effect on anyone who experiences it (making us more exhausted by social interactions, for example). It has truly devastating effects on older people, who (sorry to be blunt) become demented and die more quickly. Social atrophy also causes people to interpret neutral social cues in negative ways and thus become suspicious and paranoid. This, in turn, further alienates the socially atrophied person from those around them, creating a negative spiral. Our brains are fragile like delicate plants, shrivelling as the result of even “mild” neglect.

Even when it comes to parts of life deemed good and healthy, there’s a quietly ruthless logic at work in the way the brain operates. Consider how the brain regularly cannibalises one set of abilities in order to expand another. One example is how, when we learn to read, the brain overwrites some of the area devoted to facial recognition, instead employing it to visually recognise words.2 When we teach our kids to read, we also (unavoidably) erase some of their ability for facial recognition.

And then, of course, there’s the incredible emotional and perceptive fragility of the brain, all the other profound suffering that lies under the culturally-constructed umbrella term of “mental health.” Our brains can go straight to making us anxious without anything for that anxiety to attach to, they can make minor interactions feel horrific, they can give us seizures, hallucinations, dementia, they can make us hate and kill ourselves, they can make us hate the people who love us. None of this involves our consent. A gothic novel has nothing on the eeriness, the agony, the straight-up horror of being a brain. It is a nightmare, sometimes (and indeed, it is what makes nightmares).

Speaking of: a new-ish bit of research suggests that dreaming might have evolved to prevent our brain from overwriting the visual cortex as we sleep and thus blinding us. For while we sleep, the brain in its incredible ravenous malleability reworks and rewrites itself (for lack of better metaphors) to get ready for the next day. This is mostly useful, but it poses a problem for animals that sleep in darkness. Within an hour of a lack of visual stimulation, the part of the brain responsible for processing touch, which lies adjacent to the visual cortex (and is still experiencing stimulus even in the dark), begins to encroach upon the visual cortex, reworking visual cortex neurons for its own touch-related purposes. Without the visual hallucinations of dreams to keep our visual cortex firing, this research suggests, we might lose the part of the brain we need for sight altogether. That is why dreams are primarily visual phenomena: we still hear and feel and smell and even sort of taste things when we sleep in darkness, but we need a visual hallucination to hold that neural territory and keep the brain from self-blinding.

Perhaps by now I’ve made my point about the horrors of the brain (though there are many more of these striking examples, which may form another post at some point). In short, what the brain is “up to” is far weirder and darker than the cheerful covers of the books in the pop psychology section suggest. I do not think this is primarily to do with the intelligence of anyone involved in creating or reading these books. It’s not even to do with the pressures of capitalism on the publishing industry, which primarily commissions books about psychology that are either geared towards the mental-health end of self-help (where being reassuring is somewhat required) or the self-improvement end (which often uses the cheerful peppy language of the corporate world and follows the logic of self-optimisation). It’s true that neither of these genres tends to leave much room for authors or readers to marvel at the frightening, amazing, bonkers weirdness of the brain. But even this commercial pressure can’t fully explain why so little of the creepiness of the brain gets written about.

No, I suspect the matter is deeper than that, and probably has to do with fear: few of us would like to consider the horrors of the brain directly.

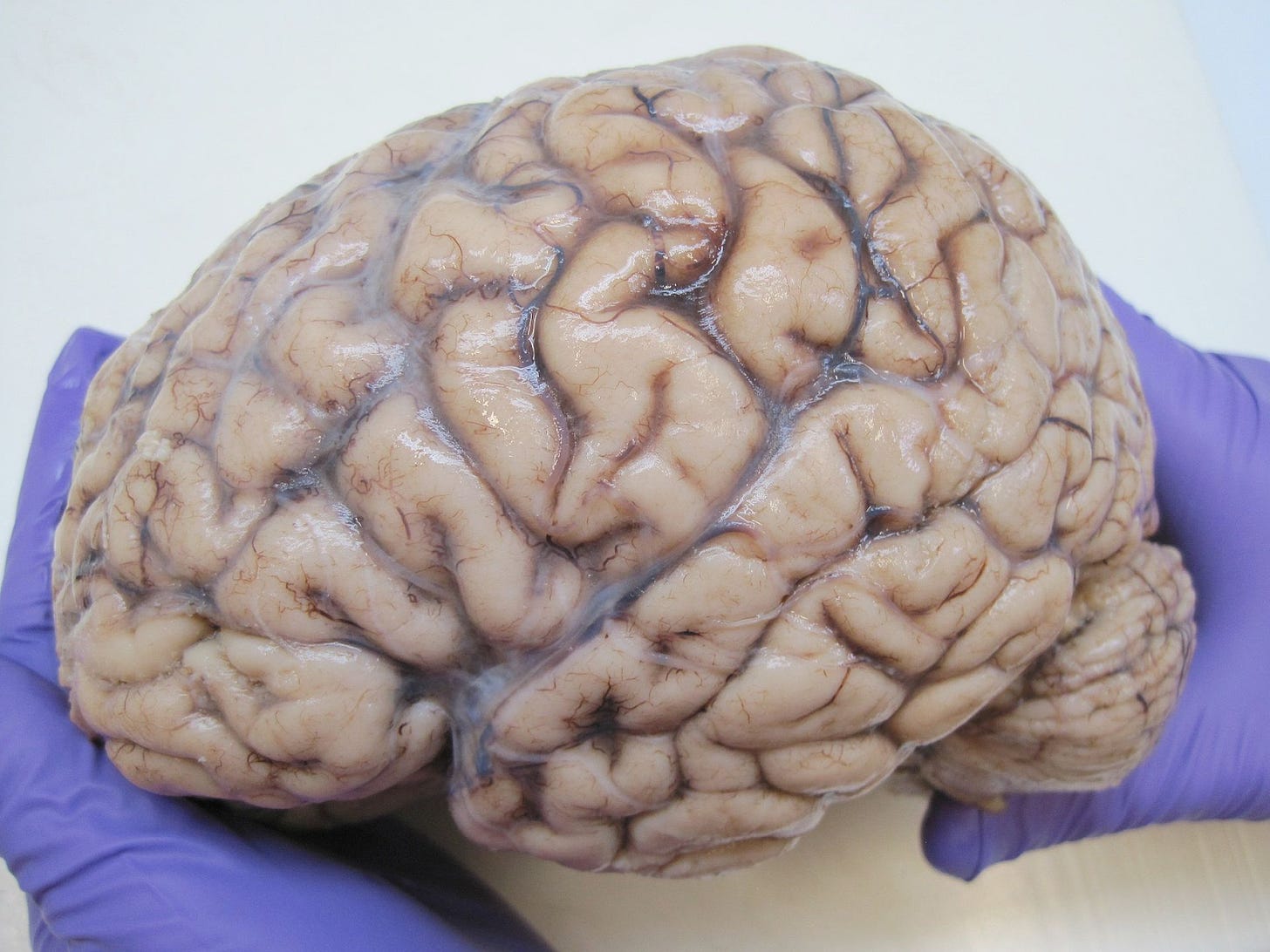

One day in my first ever psychology course, the professor came to class with a cooler. He reached in with plastic gloves and lifted out a gray mass. This is it, he told us, just a kilo or so, gelatinous, that lies between your ears: this is you, all your thoughts and dreams, all your desires and experiences, all your big ideas and deepest loves, this is where they are made, or hit their mark.

I recall the girl next to me shuddering.

Don’t get me wrong, I love being alive; I’m grateful, gloriously so, for my time generated on a shifting writhing dying set of neurons. And I believe that cognition is embodied and extended too, that some of what it means to think happens only in connection with the world around us, especially with other people. I think that when we die, part of us lives on in this way.

But for the most part, that doesn’t make the horror of living as a bunch of neurons any less vertigo-inducing. All the metaphors about computers, all hopes of human correct-ability, all the Neuralink, therapy, medication, meditation, transfusions, cryopreservation and more, can’t push away this central uncanny, scary, painful reality: we emerge from self-metabolising, self-overwriting, downright creepy neural networks, bathed in a sea of their own strange chemicals. We live through them as we live, we fail with them when they fail, and we die with them when they die.

Indeed, as the philosopher Thomas Metzinger (who, like me, draws heavily on cognitive science) has argued, there isn’t really any “self” in there anyway. Rather, the feeling of having a self is in some sense an illusion that is created in the process of our modeling the world.

Yes, this means people who are illiterate are better at facial recognition.

I am dogged by a sense that, while we think of our brain as a command post, it is really the most subservient body part.